Artificial Intelligence and the Knowledge Based Curriculum

There’s so much to write about all the issues (putting it politely) with Erica Stanford’s education agenda. Maybe there’s something good in it, but it’s escaped my notice. In this article, I will return to the centrepiece of her agenda, the so-called ‘knowledge based curriculum’. I defy anyone to define this in a way that makes sense.

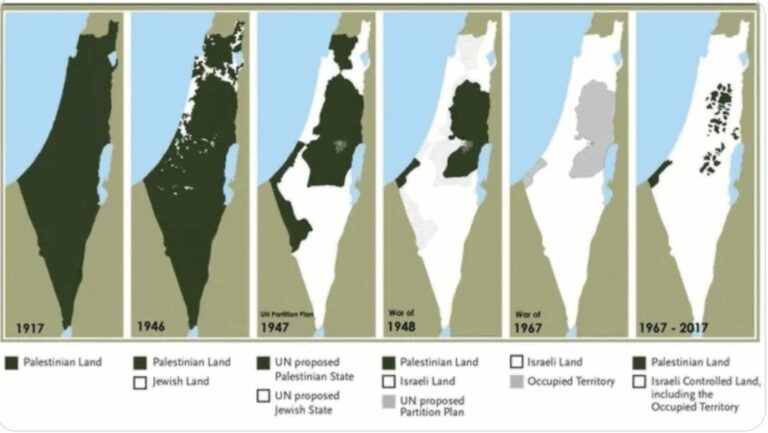

The huge elephant (mammoth?) in the room is the question of which knowledge should be included, and how that selection could ever be free of cultural, historical and political bias. What you would select could be vastly different from what I would select, which could be vastly different from what Māori educators would select, which could be different again from what Asian educators would select, and so on. With all these competing versions of truth, please tell me which version is correct, and on what basis. I’ll wait.

There are, of course, core principles in Science and Mathematics, but outside of that, everything is subject to change and interpretation. English grammar rules are different now from what they were a century ago. History – always subjective. Whose history? When? Why?

Science is not a fixed body of knowledge either. A century ago it was widely believed that the Milky Way was the whole universe, that this was all there was, until an astronomer called Edwin Hubble turned the astronomical world upside down by showing that there were countless other galaxies. Even worse, they were moving away from each other: the further away they were, the faster they were receding. So all the children who had been taught that the Milky Way was all there was suddenly found they’d been learning a falsehood. We are only one inspired scientist away from similar revelations in any field of science. And don’t get me started on the way Einstein upended the scientific world…

So what is the alternative? Quite simply, students need to learn how to learn for themselves. Existing ‘knowledge’ can be the foundation for this, but limiting learning to existing knowledge merely serves to limit their futures. Sadly, as can be seen in the government, and especially among the nutters in our society who seem to be migrating to NZ First, an inability to learn for oneself has major consequences, both for the individual and for our society.

So-called artificial intelligence is already having a major influence on student learning, and that influence will only grow, putting the knowledge curriculum people even further out of step.

Recently Bevan Holdaway addressed this in an article.

Artificial Intelligence is the Floor

“Or, what is possible in classrooms now?”

As usual, I will highlight and discuss selected passages; however, please read the full article for yourself.

‘A student walks in. You set work. They plug it into AI and that becomes their answer. This is what is happening now.

If that’s the only thing that counts as learning — if that’s the only thing we can see and measure — then the question isn’t how to ban it. The question is: what else needs to happen in this room?’

The use and impact of AI in the classroom is completely outside my experience; it wasn’t a thing when I retired. This just goes to show that the rate of change is beyond anyone’s ability to predict, something which seems to have totally bypassed the educational dinosaurs influencing, and including, Erica Stanford.

‘I was a denier for a long time. I objected to AI in my classroom, worried about inaccuracy, about cognitive cost, about the slow disappearance of struggle. But you can’t unsee what’s already visible. A 16-year-old can generate an essay that sounds like understanding. The moment they realise they can, knowledge becomes cheap.

And here’s what that reveals: knowledge was always going to become cheap. AI just accelerated what was already happening. Schools built on knowledge delivery were always going to become obsolete the moment delivery became automated and contextual.’

Exactly. This trend was already under way with the development of the internet, when the answer to anything could be found by ‘googling’ it (not that anyone with any brains should be using Google for anything, unless they like being spied on and having all their data collected for sale to advertising companies, or having all their Gmail and Google Drive documents scanned by Google’s AI application…).

‘I don’t judge the student for using AI that way. The ambient air of schooling teaches them the goal is output — the essay, the summary, the correct answer, the test. Of course they use the tool. Why would they choose struggle when the struggle has been positioned as a means to an end, and the end can now be achieved instantly? And especially when the things they’re being asked to struggle over are of little relevance to them. To be honest, I’d do the same thing if I was their age.’

We’ve always had an education system focused on output, on producing written work that is merely a reworking of existing knowledge (the copy-and-paste problem, for example), and AI has taken this to a new level by actually doing the writing as well. In the AI world, what does it matter whether students learn facts about 19th-century England (as promoted by Professor Elizabeth Rata)?

‘At the moment, schools send the message that the only place they can find themselves is within knowledge deemed important by others.

Knowledge can be commodified, packaged, plugged into a machine. That’s what makes it such a good fit for AI. But there is other work — the relational work, the imaginative work. That work is learning too, and it can’t be automated. It can only happen within and between people.’

And this is what is lacking in the knowledge based curriculum. This criticism isn’t new; it’s been made by many educational visionaries over many decades. AI just takes it to a new level.

‘And yet we’ve been systematically draining schools of the space for it.

Cutting time for the arts. Treating culture as content to be delivered rather than a living way of knowing. Squeezing out conversation, play, the slow work of paying attention to each other and to place. Because none of it fits neatly into a measurable, assessable, reportable system.’

Quite. One of Stanford’s education planks is the introduction of a nationwide assessment system called SMART (to be discussed in a future article). This follows on from previous National-led governments’ efforts to standardise assessment practices, and, as Bevan highlights, all of these require so-called learning and ‘achievement’ to be standardised and measurable. There’s no place in such a system for anything other than predetermined, bullet-point outcomes; individual differences in learning are simply not taken into account.

‘But, with AI becoming more and more pervasive, more part of the way learners go about things, the question we must ask now is: what if that squeeze was a mistake? And, what if AI is actually giving us the clarity we needed to see what matters in education, and the opportunity to realise it?’

The alternative way ahead:

‘The educators who grasp learning as relational — as something that happens within and between people, not to people — they’ve been building toward a different vision all along: not learning as information transfer. Learning as genuine encounter. As thinking together.’

Further,

‘And an education system built on this — built on genuine relationship, on seeing knowledge as alive, on honouring the knowing that’s already here — that system becomes more necessary, not less, in an AI world because it’s oriented toward what can’t be packaged and automated.’

Recently, Claire Amos went to a Deeper Learning summit in the USA, where educators presented a range of different approaches to educational needs. She wrote about this in an article of her own.

In it, she discusses the four AIs. I recommend you check these out for yourself, as discussing them here would make this article too long. Bevan also comments on them in his article.

‘Four ways of naming what actually matters when knowledge stops being the thing.

When you see it that way, the schools that won’t become obsolete are the ones where something genuinely relational is happening. Where a student feels trusted to think out loud. Where a teacher knows them, really knows them, not as a set of data points but as a person. Where what they’re learning about is tethered to their place, their community, their living world. Where imagination is treated as foundational, not optional.

Where knowledge isn’t just information to be transferred, but something alive — something that connects them to others, to where they come from, to what they’re responsible for.

These schools will be needed more, not less, in a world where knowledge can be summonsed at a click’.

There is so much wisdom here.

Bevan concludes:

‘When a student walks in and plugs their work into AI, that’s not a failure of the teacher or the school or the student. It’s a signal. It’s telling us something we needed to hear: that the work we were defending wasn’t worth defending. And that the work that actually matters — the relational, the imaginative, the human-to-human — that work is waiting. That, I think, is what we need to turn towards.’

The gulf between Erica’s knowledge based curriculum and the actual world of our students is immense and rapidly growing wider.

There’s an old education cliché: “Educating Learners for Their Future, Not Our Past”. The new world of AI gives this even more power.

This is something way beyond the grasp of the ideologues in Wellington under Erica Stanford’s command, and beyond the ideologues in the New Zealand Initiative and the Atlas Network, all of whom are desperately erecting an educational dyke (and hastily plugging holes) to keep the real world from impinging on their faded and outdated world views. Ultimately, this is a desperate battle to maintain white, Eurocentric hegemony over our children’s learning.

The battle is lost. It’s time to wake up and accept the new reality.